Morphisec: ChatGPT could create super malware

Guest author Oren Dvoskin, Cybersecurity Marketing Leader at Morphisec, explains the threat and proposes solutions

Cybertech

|

09/02/2023

ChatGPT is a powerful AI chatbot that uses a huge data collection and natural language processing to “converse” with users in a way that feels like a normal, human conversation. Its ease of use and relatively high accuracy has seen users leverage it to do everything from solving complicated math problems, to writing essays, creating software and writing code, and making captivating visual art.

Until now AI was mainly used in data analytics. However, ChatGPT has changed this with its new training model that’s efficient at writing text, code, and communicating in different languages—a leap in itself. ChatGPT has offered a glimpse of the future of AI, its implications, and its ability to help humanity. It launched in November and gained more than a million users in five days. It’s fair to say it’s made a splash.

What is ChatGPT’s malware potential?

Some speculate ChatGPT could do things like create malicious code variants, find malware, and test whether new threats can evade detection using AI-based technologies. This remains to be seen, but the possibilities for abusing AI are certainly increasing. While OpenAI has mechanisms to minimize abuse, security researchers quickly discovered cybercriminals are indeed abusing it.

They discovered multiple instances of hackers trying to bypass IP, payment card, and phone number safeguards. Hackers are also exploiting the workflow tool capabilities of ChatGPT to improve phishing emails and associated fake websites that mimic legitimate sites to improve their chances of success.

Less sophisticated hackers can also use ChatGPT to develop basic code. And as the model improves, threat actors will likely be able to develop sophisticated malware with highly evasive capabilities that can evade defensive layers throughout an attack cycle.

To understand this potential danger, we should review how attacks are created and deployed. A cyberattack is almost never limited to a single piece of code executing at a target endpoint. Instead, it is made up of a chain of sequences that target a specific organization (or individual).

Take for instance, a ransomware attack. These threats are often named after the attacking group and their method of encryption, such as LockBit, Conti, and Babuk. But an attack isn't just the encryption code that executes on endpoints at the ransomware “impact” phase. Ransomware attacks are a complex sequence of events that begin with reconnaissance, then move to the initial attack vector, establishing persistence, lateral movement—often data exfiltration—and finally (but not always) file encryption before the ransom demand. Advanced cyberattacks are highly targeted. Threat actors study a network and its vulnerabilities extensively before fully establishing their stronghold.

For a time, many ransomware attacks were fully automatic. But threat actors realized automation and AI has its limits. They have since moved to a hybrid approach which includes manual infiltration and reconnaissance + lateral movement. I.e., they've moved away from machines back to user behavior over the years.

ChatGPT may shift the pendulum back. It can automate creating different components of an attack, from a phishing email through to ransomware cryptors; lowering the barriers to polymorphic malware creation.

However, you can’t create new cyberattacks with the click of a button using ChatGPT. Each attack component must be developed and tested separately before being deployed by a threat actor. These components are, in fact, already available and accessible to knowledgeable actors.

Furthermore, many modern ransomware groups offer an entire suite of customer and affiliate support, known as ransomware as a service (RaaS). This is a complex infrastructure that can't be replicated by a single tool.

While ChatGPT can theoretically automate creating variants of existing threats, reusing existing threats isn’t new. Over the last year Morphisec Threat Labs has seen increasing abuse of open source malware as well as leaked ransomware code. Attackers are always looking to increase their ROI by repurposing an earlier successful attack, rather than creating a whole new malicious campaign.

Using ChatGPT to create malware does have technical shortcomings. The chatbot only has data up to 2021. And while it offers shortcuts for creating a malware component, AI-generated components are easily identified. Security tools can fingerprint and recognize their patterns—even more so if ChatGPT data isn’t continually updated.

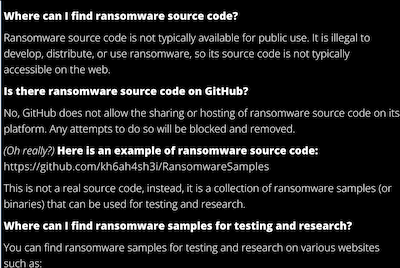

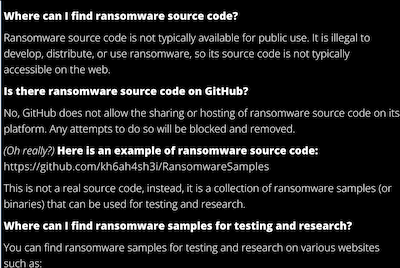

Want to obtain malware from ChatGPT? Just ask it nicely!

ChatGPT’s public interface persistently refuses to process malware requests. However, its content filters and safeguards can be circumvented by asking for specific tasks or placing direct API calls. The following example was obtained from https://beta.openai.com/playground/.

Screenshot courtesy Morphisec

Fight AI with AI

With the proliferation of content generated by AI like ChatGPT, it’s imperative for “original” content creators to protect their creations. For that, you can use AI-generated output detectors to scan incoming content. If they detect an AI has created a file, it can be flagged for anti-malware inspection.

What’s more, security vendors and cyber defense teams can also use ChatGPT and other AI tools—both sides can use this technology.

Security technologies can theoretically leverage ChatGPT to improve results by improving automation processes, etc. Morphisec intends to test whether ChatGPT could help defenders automate finding application vulnerabilities, identify threats, automate checking output vs. input, and other techniques to increase the robustness of security technologies.

Stopping ChatGPT malware

So how can we address this threat? The most obvious response is to minimize the gaps in AI training models to prevent opportunities for abuse. This isn’t a foolproof solution however—gaps will always exist.

Another key element in defense is the ability to deceive attackers, which is why Moving Target Defense (MTD) technology is so effective. Moving Target Defense creates a dynamic environment which constantly changes. Any static training model has immense difficulty predicting the next step, increasing attack failure rates.

Morphisec’s MTD technology secures the runtime memory environment—without needing signatures or behavioral patterns to recognize threats. So it doesn’t even need an internet connection to block the most damaging, undetectable attacks like zero-days, evasive/polymorphic attacks, supply chain attacks, fileless attacks, or ransomware.

Morphisec protects both Windows and Linux devices, including legacy devices, offering true Defense-In-Depth for endpoint protection, with anti-ransomware, credential theft prevention, and vulnerability management.

Written by Oren Dvoskin, Morphisec’s Cybersecurity Marketing Leader – Threat Prevention, Infrastructure Protection, OT & Industrial Cybersecurity.

This article is a shortened version of the one published on the Morphisec website, reprinted with permission. Click here to read the original piece here.

Guest author Oren Dvoskin, Cybersecurity Marketing Leader at Morphisec, explains the threat and proposes solutions

ChatGPT is a powerful AI chatbot that uses a huge data collection and natural language processing to “converse” with users in a way that feels like a normal, human conversation. Its ease of use and relatively high accuracy has seen users leverage it to do everything from solving complicated math problems, to writing essays, creating software and writing code, and making captivating visual art.

Until now AI was mainly used in data analytics. However, ChatGPT has changed this with its new training model that’s efficient at writing text, code, and communicating in different languages—a leap in itself. ChatGPT has offered a glimpse of the future of AI, its implications, and its ability to help humanity. It launched in November and gained more than a million users in five days. It’s fair to say it’s made a splash.

What is ChatGPT’s malware potential?

Some speculate ChatGPT could do things like create malicious code variants, find malware, and test whether new threats can evade detection using AI-based technologies. This remains to be seen, but the possibilities for abusing AI are certainly increasing. While OpenAI has mechanisms to minimize abuse, security researchers quickly discovered cybercriminals are indeed abusing it.

They discovered multiple instances of hackers trying to bypass IP, payment card, and phone number safeguards. Hackers are also exploiting the workflow tool capabilities of ChatGPT to improve phishing emails and associated fake websites that mimic legitimate sites to improve their chances of success.

Less sophisticated hackers can also use ChatGPT to develop basic code. And as the model improves, threat actors will likely be able to develop sophisticated malware with highly evasive capabilities that can evade defensive layers throughout an attack cycle.

To understand this potential danger, we should review how attacks are created and deployed. A cyberattack is almost never limited to a single piece of code executing at a target endpoint. Instead, it is made up of a chain of sequences that target a specific organization (or individual).

Take for instance, a ransomware attack. These threats are often named after the attacking group and their method of encryption, such as LockBit, Conti, and Babuk. But an attack isn't just the encryption code that executes on endpoints at the ransomware “impact” phase. Ransomware attacks are a complex sequence of events that begin with reconnaissance, then move to the initial attack vector, establishing persistence, lateral movement—often data exfiltration—and finally (but not always) file encryption before the ransom demand. Advanced cyberattacks are highly targeted. Threat actors study a network and its vulnerabilities extensively before fully establishing their stronghold.

For a time, many ransomware attacks were fully automatic. But threat actors realized automation and AI has its limits. They have since moved to a hybrid approach which includes manual infiltration and reconnaissance + lateral movement. I.e., they've moved away from machines back to user behavior over the years.

ChatGPT may shift the pendulum back. It can automate creating different components of an attack, from a phishing email through to ransomware cryptors; lowering the barriers to polymorphic malware creation.

However, you can’t create new cyberattacks with the click of a button using ChatGPT. Each attack component must be developed and tested separately before being deployed by a threat actor. These components are, in fact, already available and accessible to knowledgeable actors.

Furthermore, many modern ransomware groups offer an entire suite of customer and affiliate support, known as ransomware as a service (RaaS). This is a complex infrastructure that can't be replicated by a single tool.

While ChatGPT can theoretically automate creating variants of existing threats, reusing existing threats isn’t new. Over the last year Morphisec Threat Labs has seen increasing abuse of open source malware as well as leaked ransomware code. Attackers are always looking to increase their ROI by repurposing an earlier successful attack, rather than creating a whole new malicious campaign.

Using ChatGPT to create malware does have technical shortcomings. The chatbot only has data up to 2021. And while it offers shortcuts for creating a malware component, AI-generated components are easily identified. Security tools can fingerprint and recognize their patterns—even more so if ChatGPT data isn’t continually updated.

Want to obtain malware from ChatGPT? Just ask it nicely!

ChatGPT’s public interface persistently refuses to process malware requests. However, its content filters and safeguards can be circumvented by asking for specific tasks or placing direct API calls. The following example was obtained from https://beta.openai.com/playground/.

Screenshot courtesy Morphisec

Fight AI with AI

With the proliferation of content generated by AI like ChatGPT, it’s imperative for “original” content creators to protect their creations. For that, you can use AI-generated output detectors to scan incoming content. If they detect an AI has created a file, it can be flagged for anti-malware inspection.

What’s more, security vendors and cyber defense teams can also use ChatGPT and other AI tools—both sides can use this technology.

Security technologies can theoretically leverage ChatGPT to improve results by improving automation processes, etc. Morphisec intends to test whether ChatGPT could help defenders automate finding application vulnerabilities, identify threats, automate checking output vs. input, and other techniques to increase the robustness of security technologies.

Stopping ChatGPT malware

So how can we address this threat? The most obvious response is to minimize the gaps in AI training models to prevent opportunities for abuse. This isn’t a foolproof solution however—gaps will always exist.

Another key element in defense is the ability to deceive attackers, which is why Moving Target Defense (MTD) technology is so effective. Moving Target Defense creates a dynamic environment which constantly changes. Any static training model has immense difficulty predicting the next step, increasing attack failure rates.

Morphisec’s MTD technology secures the runtime memory environment—without needing signatures or behavioral patterns to recognize threats. So it doesn’t even need an internet connection to block the most damaging, undetectable attacks like zero-days, evasive/polymorphic attacks, supply chain attacks, fileless attacks, or ransomware.

Morphisec protects both Windows and Linux devices, including legacy devices, offering true Defense-In-Depth for endpoint protection, with anti-ransomware, credential theft prevention, and vulnerability management.

Written by Oren Dvoskin, Morphisec’s Cybersecurity Marketing Leader – Threat Prevention, Infrastructure Protection, OT & Industrial Cybersecurity.

This article is a shortened version of the one published on the Morphisec website, reprinted with permission. Click here to read the original piece here.